WHAT ACTUALLY BREAKS IN IP FABRICS AT SCALE

When we start working with new customers, we often hear a familiar statement: “Our data center is built on a spine-leaf IP fabric.”

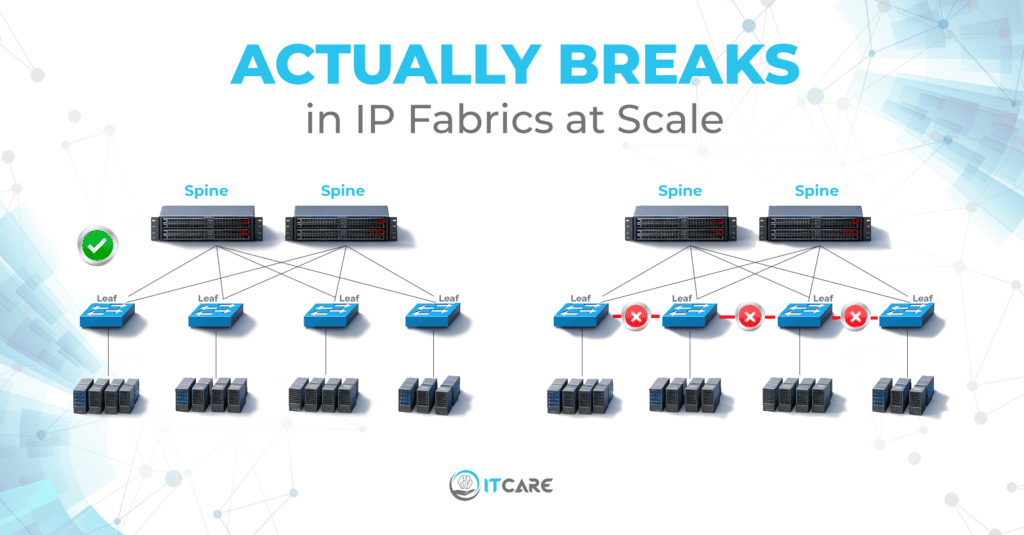

However, once we perform a network assessment, reality sometimes looks different. A common pattern we encounter is the presence of direct links between leaf switches. While this may appear harmless during initial deployments, it violates one of the most fundamental principles of a traditional IP fabric design.

In a clean architecture, leaf switches connect only to spines, and connectivity is symmetrical and consistent across the fabric: every leaf has the same uplinks to every spine. Predictability is the entire purpose of the model. The moment leaf-to-leaf links are introduced, traffic patterns become less deterministic and subtle problems begin to surface.
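The rule above is easy to check mechanically. The Python sketch below assumes a hypothetical inventory in which every device has a role of either "spine" or "leaf" and links are listed as device pairs. It is not tied to any vendor tool. It flags same-role links (leaf-to-leaf or spine-to-spine) and any leaf that is missing an uplink to one of the spines.

```python
from collections import defaultdict

# Hypothetical inventory: device name -> role ("spine" or "leaf").
ROLES = {
    "spine1": "spine", "spine2": "spine",
    "leaf1": "leaf", "leaf2": "leaf", "leaf3": "leaf",
}

# Hypothetical cabling: unordered pairs of device names.
LINKS = [
    ("leaf1", "spine1"), ("leaf1", "spine2"),
    ("leaf2", "spine1"), ("leaf2", "spine2"),
    ("leaf3", "spine1"), ("leaf3", "spine2"),
    ("leaf1", "leaf2"),  # the kind of shortcut an assessment often finds
]


def validate_fabric(roles, links):
    """Return human-readable violations of the two-tier spine-leaf rules."""
    violations = []
    uplinks = defaultdict(set)  # leaf -> spines it is cabled to
    for a, b in links:
        ra, rb = roles[a], roles[b]
        if ra == rb:
            # Same-role links (leaf-leaf or spine-spine) break the model.
            violations.append(f"{ra}-to-{rb} link: {a} <-> {b}")
        else:
            leaf, spine = (a, b) if ra == "leaf" else (b, a)
            uplinks[leaf].add(spine)
    spines = {d for d, r in roles.items() if r == "spine"}
    for leaf in sorted(d for d, r in roles.items() if r == "leaf"):
        missing = spines - uplinks[leaf]
        if missing:
            # Symmetry: every leaf should have an uplink to every spine.
            violations.append(f"{leaf} missing uplinks to {sorted(missing)}")
    return violations


for violation in validate_fabric(ROLES, LINKS):
    print(violation)
```

Against the sample data it reports only the leaf1 <-> leaf2 shortcut; in practice the inventory would come from LLDP data or a source of truth rather than a hard-coded dictionary.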

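One of the most frequent side effects involves ECMP behavior. A direct leaf-to-leaf link is shorter than the two-hop path through the spines, so routing prefers it, and traffic between those two leaves stops being load-balanced across the spines. Under real production traffic, this shows up as unexpected forwarding decisions, asymmetric paths, and harder troubleshooting. What worked in a small environment becomes fragile at scale.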
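To see why a single shortcut matters, consider a simplified model in which every link has equal cost (roughly what equal IGP metrics, or AS-path length in a typical eBGP underlay, would give you). The sketch below counts equal-cost shortest paths between two leaves with a breadth-first search. The four-spine topology and device names are hypothetical.

```python
from collections import deque


def build_graph(links):
    """Build an undirected adjacency map from (a, b) link pairs."""
    graph = {}
    for a, b in links:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def equal_cost_paths(graph, src, dst):
    """Return (hop_count, number_of_shortest_paths) via BFS path counting."""
    dist = {src: 0}
    count = {src: 1}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        for nbr in graph[node]:
            if nbr not in dist:
                # First time we reach nbr: it sits one hop beyond node.
                dist[nbr] = dist[node] + 1
                count[nbr] = count[node]
                queue.append(nbr)
            elif dist[nbr] == dist[node] + 1:
                # Another equal-cost way to reach nbr.
                count[nbr] += count[node]
    return dist.get(dst), count.get(dst, 0)


SPINES = ["spine1", "spine2", "spine3", "spine4"]
clean = [(leaf, s) for leaf in ("leaf1", "leaf2") for s in SPINES]
with_shortcut = clean + [("leaf1", "leaf2")]

print(equal_cost_paths(build_graph(clean), "leaf1", "leaf2"))          # (2, 4)
print(equal_cost_paths(build_graph(with_shortcut), "leaf1", "leaf2"))  # (1, 1)
```

In the clean fabric, leaf1 reaches leaf2 over four equal two-hop paths, one per spine. One direct link creates a one-hop path that wins outright, so all traffic between those two leaves collapses onto that single link instead of being spread across the spines.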

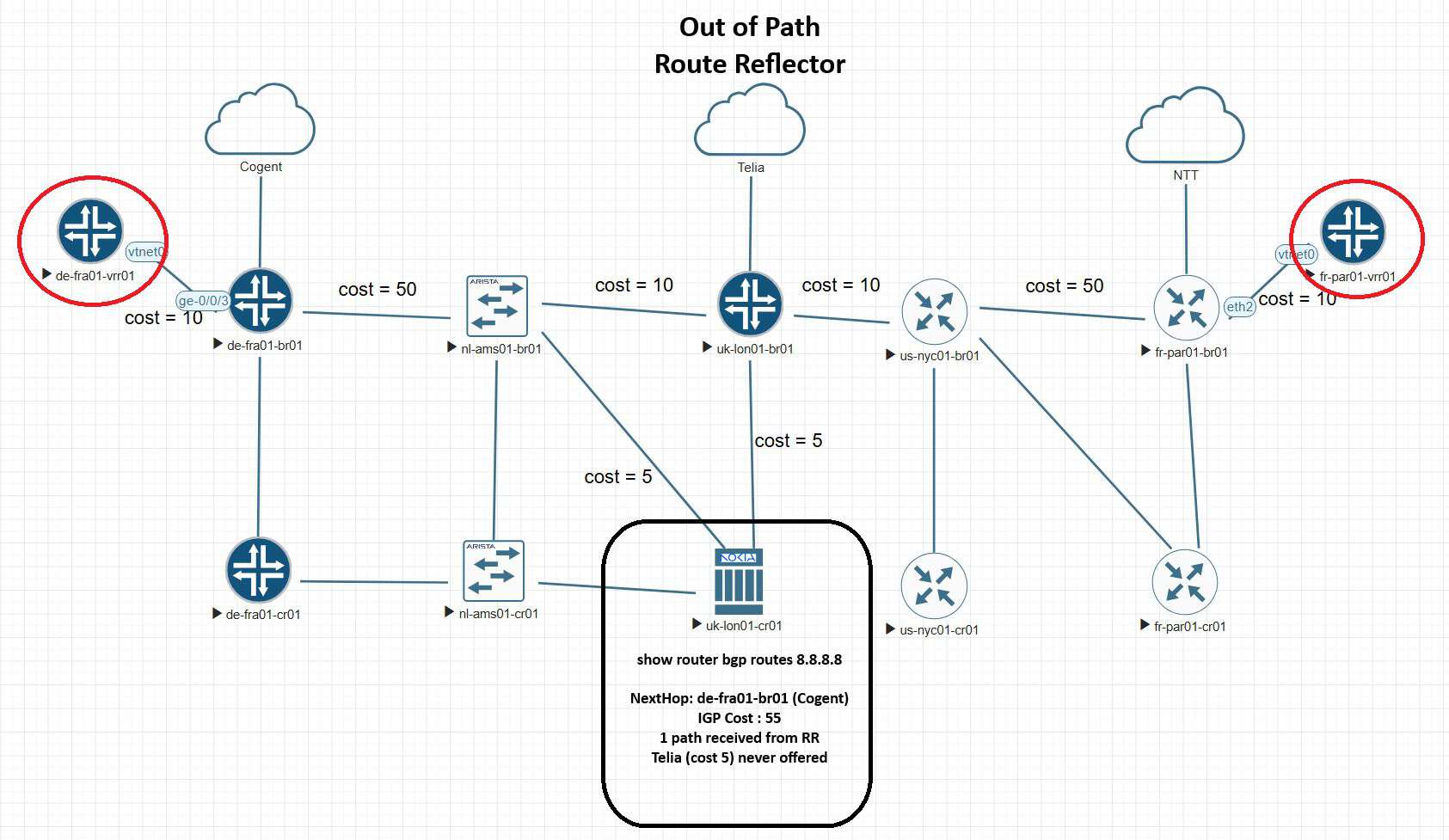

Another critical design aspect that is often underestimated is ASN allocation. The ASN scheme for underlay and overlay BGP sessions must be decided on day zero, because changing it later in a live fabric is operationally expensive and risky. Even if data center interconnect (DCI) is not part of the initial scope, the plan should leave room for future expansion.
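One way to make the day-zero decision explicit is to derive ASNs from a written plan rather than assigning them ad hoc. The sketch below follows a common eBGP underlay pattern in the spirit of RFC 7938: spines share one ASN and every leaf gets a unique one, drawn from the private 4-byte range (4200000000 to 4294967294, per RFC 6996). The `asn_plan` helper and the per-site block size are hypothetical. The idea is that reserving a disjoint block per site keeps ASNs from overlapping if fabrics are later interconnected, where a repeated ASN would cause BGP AS-path loop prevention to reject routes.

```python
# Private 4-byte ASN range reserved by RFC 6996.
PRIVATE_ASN_4B_START = 4_200_000_000
PRIVATE_ASN_4B_END = 4_294_967_294


def asn_plan(site_id, num_leaves, block_size=10_000):
    """Hypothetical per-site plan: one shared spine ASN, one unique ASN per leaf.

    Each site owns the block [base, base + block_size), so sites never overlap.
    """
    base = PRIVATE_ASN_4B_START + site_id * block_size
    if num_leaves + 1 > block_size:
        raise ValueError("site block too small for requested leaf count")
    if base + block_size - 1 > PRIVATE_ASN_4B_END:
        raise ValueError("site_id falls outside the private 4-byte ASN range")
    plan = {"spines": base}
    for i in range(1, num_leaves + 1):
        plan[f"leaf{i}"] = base + i
    return plan


dc1 = asn_plan(site_id=0, num_leaves=4)
dc2 = asn_plan(site_id=1, num_leaves=4)  # reserved now, even if DCI comes later
print(dc1["spines"], dc1["leaf4"])  # 4200000000 4200000004
print(dc2["spines"])                # 4200010000
```

The exact numbers matter less than the fact that the scheme is deterministic and documented before the first BGP session comes up.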

Hardware consistency also plays an important role. Mixing platforms across layers may work functionally, but it reduces operational predictability, which is precisely what IP fabrics are designed to achieve.

IP fabric architectures reward discipline. Small deviations from design principles tend to create disproportionately large operational challenges over time.

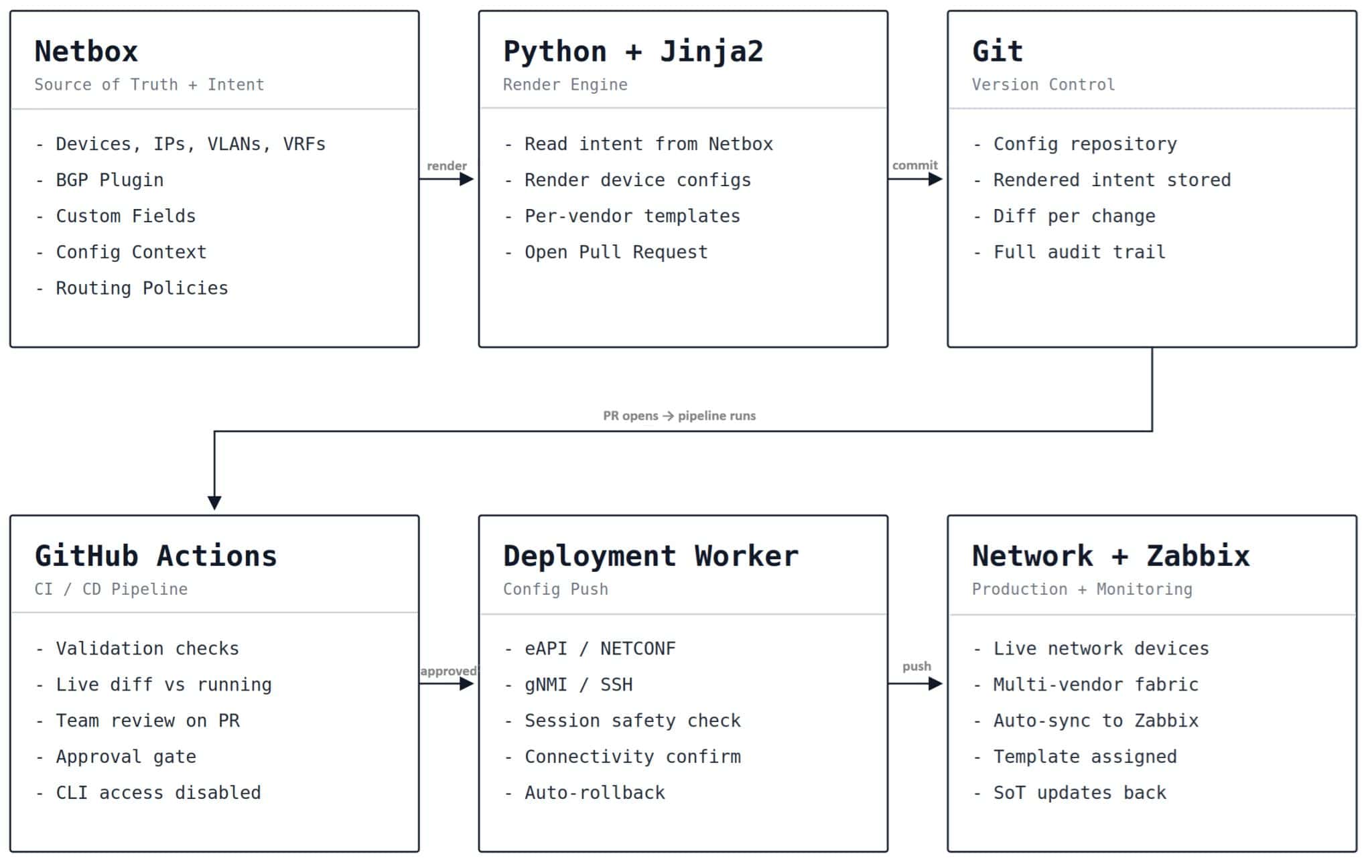

This is why careful planning and experienced engineering matter long before the first production packets flow.

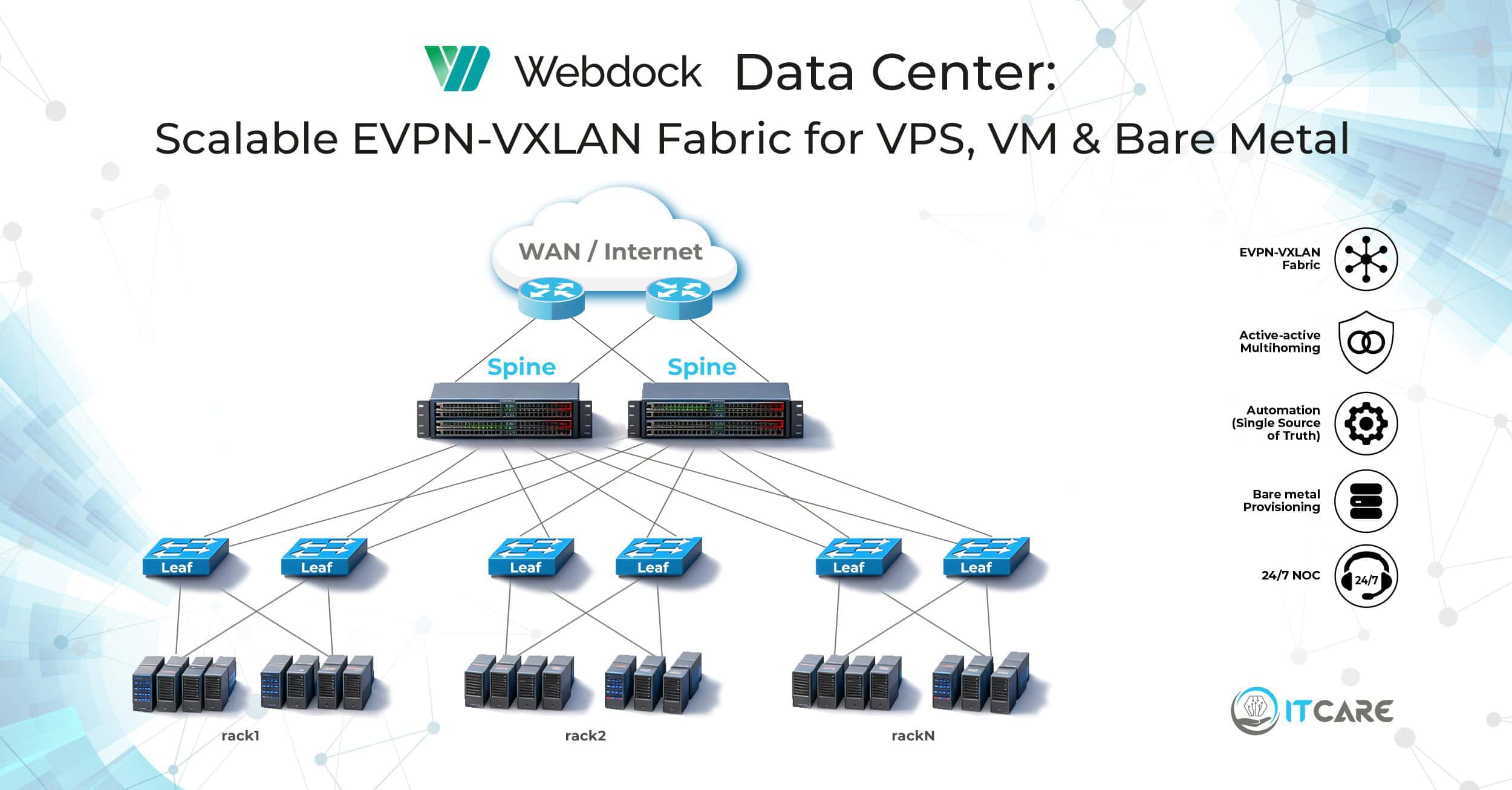

For an example of EVPN-VXLAN in a production data center build, see our Webdock case study.

#IPFabric #SpineLeaf #DatacenterNetworking #NetworkDesign #ECMP #BGP #InfrastructureEngineering #ITcare